Abstract

A Chosen-Plaintext Attack (CPA) is a cryptographic analysis game for encryption in which an adversary queries an encryption oracle with plaintexts and observes the resulting ciphertexts. At an arbitrary time, the adversary submits two challenge plaintexts but receives only one ciphertext, and finally guesses which of the two challenge plaintexts was encrypted. Neural distinguishers, a powerful representative of Artificial Intelligence (AI) methods, have recently been used in cryptographic analysis. However, they cannot be applied directly to CPA because their input requirements and objectives differ. This work aims to address this gap. We provide the first rigorous and systematic formulation of CPA from a deep learning perspective. Specifically, we introduce NeuralCPA, a novel deep neural network-based method for evaluating the CPA security of block ciphers, as an initial effort toward AI-based CPA analysis. We empirically validate its effectiveness across a diverse range of block ciphers, including SIMON, SPECK, LEA, HIGHT, XTEA, TEA, PRESENT, AES, and KATAN. Our experimental results confirm that NeuralCPA consistently achieves significant distinguishing advantages in round-reduced settings. Notably, our attack success rate ranges from 51% to 76.4%.

Overview

Problem.

In the CPA game, two plaintexts $ m_0 $ and $ m_1 $ are given. A hidden bit $ b $ selects one of them, and $ m_b $ is encrypted by $ E_k $ to produce the challenge ciphertext $ c $. The goal is to guess $ b $.
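
As a point of reference, success in this game is usually measured against the $ 1/2 $ baseline of a random guess. Writing $ b $ for the hidden bit and $ b' $ for the adversary's guess (both $ b' $ and $ \mathrm{Adv} $ are our shorthand here, not notation taken from the source), one common convention is:

$$
\mathrm{Adv}(\mathcal{A}) = \left| \Pr[b' = b] - \tfrac{1}{2} \right|
$$

Under this convention, a success rate of 0.764 corresponds to an advantage of 0.264. Some texts scale the advantage by a factor of 2, so the convention should be checked before comparing numbers across papers.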

Idea.

NeuralCPA reformulates this problem as a supervised learning task by leveraging the distinguishing capability of neural networks.

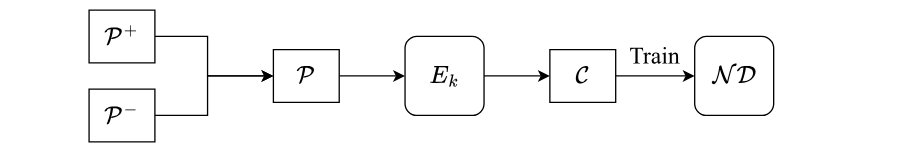

The overall workflow of NeuralCPA is shown below.

1. Sample positive pairs (satisfying the preset input difference $ \delta $) and negative pairs (not satisfying $ \delta $), and merge them into a single set of plaintext pairs.

2. Encrypt all pairs in this set using the block cipher $ E_k $ to obtain the corresponding ciphertext pairs.

3. Train a neural distinguisher on the ciphertext pairs to predict whether the corresponding plaintext pair satisfies $ \delta $.
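
The data-generation part of these steps can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: `toy_encrypt` is a hypothetical 16-bit keyed permutation standing in for the block cipher, the input difference is taken to be an XOR difference, and `make_dataset` is a helper name introduced here. Training the distinguisher itself is omitted.

```python
import numpy as np

MASK = 0xFFFF  # 16-bit toy block size (assumption, chosen for brevity)


def toy_encrypt(pt, key, rounds=3):
    """Hypothetical keyed permutation used as a placeholder; NOT a real cipher."""
    x = np.asarray(pt, dtype=np.uint32) & MASK
    for r in range(rounds):
        rk = np.uint32((key >> (16 * (r % 4))) & MASK)  # 16-bit round key
        x = (x + rk) & MASK                  # modular addition of the round key
        x = ((x << 3) | (x >> 13)) & MASK    # rotate left by 3 bits
        x = x ^ (x >> 7)                     # xorshift-style mixing
    return x


def make_dataset(n, delta, key, rng):
    """Return plaintext pairs, ciphertext pairs, and labels (1 = positive)."""
    labels = rng.integers(0, 2, size=n, dtype=np.uint8)
    p0 = rng.integers(0, 1 << 16, size=n, dtype=np.uint32)

    # Negative pairs use a random nonzero difference that is not delta.
    rand_diff = rng.integers(1, 1 << 16, size=n, dtype=np.uint32)
    bad = rand_diff == delta
    while bad.any():
        rand_diff[bad] = rng.integers(1, 1 << 16, size=int(bad.sum()),
                                      dtype=np.uint32)
        bad = rand_diff == delta

    # Positive pairs differ by exactly delta (XOR difference is an assumption).
    diff = np.where(labels == 1, np.uint32(delta), rand_diff)
    p1 = p0 ^ diff

    c0 = toy_encrypt(p0, key)
    c1 = toy_encrypt(p1, key)
    return (p0, p1), (c0, c1), labels


rng = np.random.default_rng(0)
(p0, p1), (c0, c1), y = make_dataset(1000, 0x0040, 0x0123456789ABCDEF, rng)
```

A classifier would then be trained on `(c0, c1)` with targets `y`; the plaintexts are returned only so the labels can be checked.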

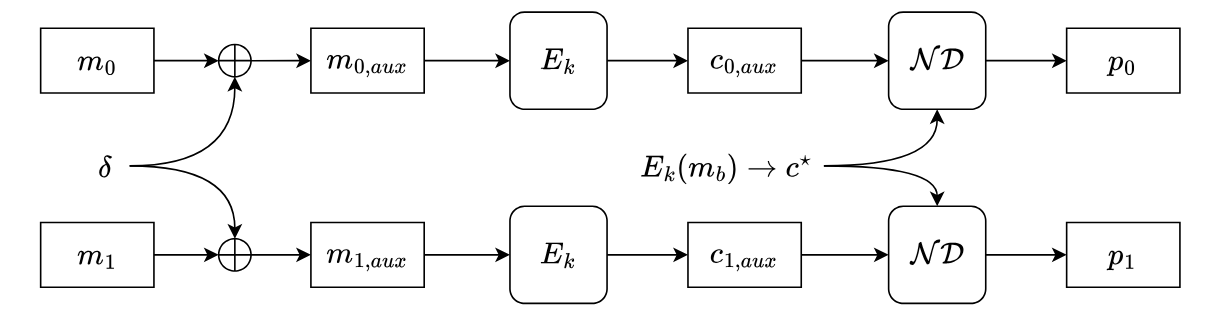

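1. Construct two auxiliary plaintexts, one derived from each challenge plaintext by applying the input difference $ \delta $.

2. Encrypt the auxiliary plaintexts using $ E_k $ to obtain the auxiliary ciphertexts.

3. Use the trained distinguisher to compute a score for each ciphertext pair formed by the challenge ciphertext and one auxiliary ciphertext.

4. Output the final guess: the index of the challenge plaintext whose auxiliary pair receives the higher score.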
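
The guessing procedure above can be sketched as a short function. This is an illustrative sketch under assumptions, not the authors' code: the difference is applied by XOR, the pair is scored in the order (challenge, auxiliary), and `neural_cpa_guess`, `toy_encrypt`, and `toy_score` are hypothetical names. The demo uses a trivially weak XOR "cipher" and a perfect stand-in distinguisher so that the logic can be checked by hand.

```python
from typing import Callable


def neural_cpa_guess(m0: int, m1: int, c_star: int, delta: int,
                     encrypt: Callable[[int], int],
                     score: Callable[[int, int], float]) -> int:
    """Guess which of m0, m1 was encrypted to c_star."""
    # Steps 1-2: auxiliary plaintexts (XOR difference assumed) and ciphertexts.
    aux_ct = [encrypt(m0 ^ delta), encrypt(m1 ^ delta)]
    # Step 3: score each (challenge, auxiliary) ciphertext pair.
    scores = [score(c_star, c_aux) for c_aux in aux_ct]
    # Step 4: the pair that looks most like a "positive" pair wins.
    return 0 if scores[0] >= scores[1] else 1


# Stand-ins for the demo only: XOR with a fixed key preserves differences,
# so a distinguisher that checks the ciphertext difference is perfect.
KEY, DELTA = 0x5A5A, 0x0040
toy_encrypt = lambda m: m ^ KEY
toy_score = lambda ca, cb: 1.0 if (ca ^ cb) == DELTA else 0.0

m0, m1 = 0x1234, 0xBEEF
guesses = []
for b in (0, 1):
    c_star = toy_encrypt((m0, m1)[b])  # challenger encrypts m_b
    guesses.append(neural_cpa_guess(m0, m1, c_star, DELTA,
                                    toy_encrypt, toy_score))
```

With a real round-reduced cipher the score would come from the trained network and would only be correct with some probability, which is what the CPA success rate measures.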

Methodology

When mapped to the standard CPA experiment, NeuralCPA operates in three stages:

(i) Train the neural distinguisher during the pre-challenge phase.

(ii) Construct auxiliary ciphertext pairs using the input difference in the post-challenge phase.

(iii) Choose the plaintext by comparing auxiliary scores in the guess phase.

The formal definition is given below, with the steps introduced by our method highlighted.

Require: Encryption scheme $ \Pi $, message space $ \mathcal{M} $, key space $ \mathcal{K} $.

Ensure: Experiment outcome (1 if the adversary $ \mathcal{A} $ wins, 0 otherwise).

Initialization: The challenger $ \mathcal{C} $ samples a secret key $ k \xleftarrow{\$} \mathcal{K} $ and gives $ \mathcal{A} $ access to the encryption oracle $ E_k(\cdot) $. $ \mathcal{A} $ chooses a training data size $ n $ and a specific input difference $ \delta $.

Results

We evaluate NeuralCPA across multiple round-reduced ciphers. The table reports the distinguishing Accuracy (Acc.) and CPA Success Rate (CPA SR.), each evaluated on samples with plaintexts disjoint from the training set. Both metrics exceed 0.5 in every listed setting, which supports the effectiveness of the proposed approach.

| Cipher | Full Rounds | Rounds | Acc. | CPA SR. |
|---|---|---|---|---|
| SIMON32/64 | 32 | 9 | 0.792 | 0.884 |
| | | *10 | 0.570 | 0.598 |
| | | *11 | 0.522 | 0.531 |
| SIMON64/128 | 44 | 11 | 0.640 | 0.693 |
| | | 12 | 0.537 | 0.552 |
| | | *13 | 0.509 | 0.514 |
| SIMON128/256 | 68 | 17 | 0.603 | 0.640 |
| | | 18 | 0.538 | 0.552 |
| | | *19 | 0.510 | 0.514 |
| SPECK32/64 | 22 | 6 | 0.895 | 0.954 |
| | | 7 | 0.677 | 0.733 |
| | | *8 | 0.527 | 0.530 |
| SPECK64/128 | 27 | 7 | 0.821 | 0.901 |
| | | *8 | 0.586 | 0.610 |
| SPECK128/256 | 34 | 9 | 0.943 | 0.981 |
| | | *10 | 0.678 | 0.725 |
| LEA-128 | 24 | 10 | 0.656 | 0.694 |
| | | 11 | 0.524 | 0.534 |
| HIGHT | 32 | 10 | 0.751 | 0.764 |
| XTEA | 64 | 4 | 0.992 | 0.993 |
| | | *5 | 0.575 | 0.606 |
| TEA | 64 | 4 | 0.987 | 0.991 |
| | | *5 | 0.602 | 0.641 |
| PRESENT-80 | 31 | 7 | 0.819 | 0.891 |
| | | 8 | 0.609 | 0.687 |
| | | *9 | 0.506 | 0.510 |
| AES-128 | 10 | 2 | 1.000 | 1.000 |
| | | 3 | 0.518 | 0.524 |
| KATAN32 | 254 | *55 | 0.648 | 0.705 |
| | | *60 | 0.558 | 0.579 |
| | | *65 | 0.506 | 0.511 |
| CHACHA20 | 20 | 3 | 0.640 | 0.693 |

Notes. Rounds marked with * use a distinguisher pretrained on the previous round (or iteration, e.g., 5-round steps for KATAN) and then fine-tuned.

Contributions

- Deep learning formulation of CPA. We present the first rigorous and systematic formulation of CPA from a deep learning perspective, casting the CPA experiment as a supervised learning task.

- Analysis for block ciphers. We introduce NeuralCPA, a neural network–based framework for evaluating the CPA security of block ciphers and empirically demonstrate its effectiveness.

- Extensions for multi-instance settings. We show that NeuralCPA can be extended to multi-instance settings, where even a marginal per-instance advantage can, in the ideal case, be amplified.
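
To give a feel for the amplification mentioned in the last item, the sketch below computes the success probability of a simple majority vote over independent guesses. Majority voting and independence between instances are assumptions made here for illustration; the source does not state which aggregation rule the multi-instance extension uses, and `majority_success` is a hypothetical helper name.

```python
from math import comb


def majority_success(p: float, n: int) -> float:
    """Probability that a majority of n independent guesses is correct,
    when each guess is correct with probability p. n must be odd."""
    if n % 2 == 0:
        raise ValueError("n must be odd so that a majority always exists")
    # Sum the binomial tail: more than n/2 correct guesses.
    return sum(comb(n, k) * p**k * (1 - p)**(n - k)
               for k in range(n // 2 + 1, n + 1))


single = majority_success(0.51, 1)      # one instance: equals p
many = majority_success(0.51, 1001)     # many instances: larger than p
```

Under these idealized assumptions, a per-instance success rate only slightly above one half grows toward 1 as the number of instances increases, which is the sense in which a marginal advantage can be amplified.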

BibTeX

@misc{cryptoeprint:2026/328, author = {Xuanya Zhu and Liqun Chen and Yangguang Tian and Gaofei Wu and Xiatian Zhu}, title = {{NeuralCPA}: A Deep Learning Perspective on Chosen-Plaintext Attacks}, howpublished = {Cryptology {ePrint} Archive, Paper 2026/328}, year = {2026}, url = {https://eprint.iacr.org/2026/328}}